Fundamental scientific methods

| Faculty | Faculté des sciences de la société |
|---|---|
| Department | Département de science politique et relations internationales |
| Professor(s) | Marco Giugni[1] |
| Course | Introduction to the methods of political science |


Challenges to empirical inference in political science

Definition

- Inferring: drawing general conclusions from facts, observations and experimental data. Empirical inference is used to establish links and relationships between explanatory factors and to explain on the basis of concrete or empirical evidence.

- Causality: how to judge cause-effect relationships is a central issue, especially in methodology.

- Empirical: drawing general conclusions from empirical evidence.

The three challenges

Multi-causality

- Almost everything has an impact: any phenomenon is determined by a multiplicity of possible causes. For example, voting behaviour is not determined by a single cause but by several factors. The level of education is one, but other factors also come into play, such as gender and class position.

- Each phenomenon has several causes: it is difficult to defend a position that says that a given phenomenon has only one cause. When there are several factors, this complicates the task.

Context Conditionality

Linked to comparison: in Switzerland there is an institutional channel (direct democracy) that allows citizens to participate, which helps explain why people participate there but not in countries without direct democracy. The causes of a phenomenon can vary from one context to another. The link between social class and voting, for example, is conditional on context: the context modifies the relationship between the two factors under study. Effects vary across contexts.

- The effect of almost everything depends on almost everything else.

- The effects of each cause tend to vary across contexts.
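A small simulation can make context conditionality concrete. The sketch below uses invented data, not results from the course: the two contexts, the effect sizes and the helper `pearson` are assumptions chosen purely for illustration. The same factor X is related to Y in one context and unrelated to it in the other.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(7)  # fixed seed so the run is reproducible
n = 3000

def simulate_context(effect_of_x):
    """Hypothetical model: y = effect_of_x * x + independent noise."""
    x = [rng.gauss(0, 1) for _ in range(n)]
    y = [effect_of_x * xi + rng.gauss(0, 1) for xi in x]
    return x, y

# Context A (assumed): X has an effect on Y.
x_a, y_a = simulate_context(1.0)
# Context B (assumed): X has no effect on Y.
x_b, y_b = simulate_context(0.0)

r_context_a = pearson(x_a, y_a)
r_context_b = pearson(x_b, y_b)
print(f"context A: r = {r_context_a:.2f}")
print(f"context B: r = {r_context_b:.2f}")
```

Pooling both contexts into one sample would hide the fact that the relationship exists in only one of them, which is why the context has to be part of the analysis.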

Endogeneity

Causes and effects influence each other; this is the biggest problem in empirical studies, especially those that follow the observational approach. For example, political interest influences participation: there is a strong correlation between interest (independent variable) and participation (dependent variable). The problem is that causality can be reversed: "what I want to explain can explain what is supposed to explain what I wanted to explain".

The difficulty lies in distinguishing between "what I want to explain" and the factor that explains this phenomenon. The cause becomes an effect and vice versa.

- Almost everything causes almost everything else.

- Causes and effects influence each other.

It is often difficult to say in which direction the causality one wishes to establish runs.
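The point that covariation alone does not reveal the causal direction can be illustrated with a short sketch. Everything in it is hypothetical: two simulated "worlds" with invented variables, one in which interest produces participation and one in which participation produces interest. The helper `pearson` is defined here for illustration only.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(3)  # fixed seed so the run is reproducible
n = 4000

# World 1 (assumed): interest causes participation.
interest_1 = [rng.gauss(0, 1) for _ in range(n)]
participation_1 = [i + rng.gauss(0, 1) for i in interest_1]

# World 2 (assumed): participation causes interest.
participation_2 = [rng.gauss(0, 1) for _ in range(n)]
interest_2 = [p + rng.gauss(0, 1) for p in participation_2]

r_world_1 = pearson(interest_1, participation_1)
r_world_2 = pearson(interest_2, participation_2)
print(f"interest -> participation: r = {r_world_1:.2f}")
print(f"participation -> interest: r = {r_world_2:.2f}")
```

Both worlds produce a correlation of similar size, and the coefficient is symmetric in its two arguments, so the observed covariation is compatible with either direction.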

The concept of cause

Determinant of causal relationship

The scientific approach in the social sciences seeks to determine causal relationships.

If C (cause), then E (effect)

That is not enough! Such a relationship between C and E may hold only sometimes, or always.

Example: one needs to be more specific than saying that if the level of education is high, then participation is higher.

This formulation only asserts that if there is C, then there is E. It does not make the relationship univocal, whereas a cause-effect relationship must be univocal.

"If C, then (and only then) always E"

In this case, the four characteristics of a cause-effect relationship are present:

- Conditionality: the effect occurs provided the cause is present.

- Succession: first the cause, then the effect.

- Constancy: whenever the cause is present, the effect is also observed.

- Univocity: the link is unique.

This introduces important elements for defining what a cause is, but the approach is still insufficient!

For example: if the level of education is high, then and only then is there always higher participation.

However, according to some epistemologists of science, an element is still missing: there must be a genetic link, a link through which the cause produces the effect.

"If C, then (and only then) E always produced by C"

Correct. A given effect must not only be correlated with a cause; it must be produced and generated by that cause. The distinction is more philosophical than substantive.

Definition of what a cause is

- E is generated by C: to speak of a cause, it is not enough to observe a covariation between a cause and an effect; the effect must also be generated by the cause.

- The cause must produce the effect (e.g. a high level of education generates participation in politics).

Causal thinking belongs only to the theoretical level. When we talk about a cause, we are at the purely theoretical level, not the empirical one. Therefore, it can never be said empirically that a variation of C produces a variation of E.

Cause-effect relationships are theoretical: they can never be established empirically, only corroborated.

If one observes empirically, on the basis of data, that a variation of C is regularly followed by a variation of E, one can say that there is empirical corroboration of a causal hypothesis. A distinction must be made between the theoretical level, where causality lies, and the empirical level, where one can only get closer to it by studying covariations.

In other words, if we observe that a variation of C is regularly followed by a variation of E, there is an element of corroboration, but we still have to eliminate any other possible cause. Empirically, the task is to find a covariation; but since there is multicausality, how can we make sure that this covariation really exists and say that we have empirical corroboration of the cause-effect hypothesis?

Empirical corroboration of a causal relationship

If one observes empirically that a variation of X is regularly followed by a variation of Y while keeping constant all the other possible Xs (other causes and explanatory factors), one has a strong scientific corroboration of the hypothesis that X is the cause of Y.

In empirical research, one can never strictly speak of cause and effect. The cause-and-effect relationship remains in the realm of theory; empirically, one can only get closer to it under certain conditions, by finding a covariation while keeping the other factors constant.

- Covariation between the dependent variable (the effect, which depends on something else) and the independent variable (the cause, which does not depend on anything else): both must vary.

- Causal direction: causality must be given a direction; this is the problem of endogeneity.

- Logical impossibility: if a theory holds that social class influences political orientation, it is obvious that it is class that determines orientation. Causality cannot be reversed.

In the social sciences, we aim for causal explanation. Strictly speaking, however, this can never be fully achieved, because on the epistemological level the idea of cause and effect lies at the theoretical level. At the empirical level, we can only speak of covariation, but under certain conditions we can corroborate a causal relationship, i.e. support it empirically.

Empirical corroboration of a causal relationship

There are three conditions that must be met in order to empirically corroborate a causal relationship.

Covariation between independent variable and dependent variable

- Variation of the independent variable: this is the cause (X), e.g. education.

- Variation of the dependent variable: it depends on X and is the effect, e.g. participation. What one is supposed to explain must vary, and so must the independent variable!

In this way, the theoretical causal relationship can be empirically corroborated as far as possible.

Causal direction

"I can't tell if it's X that determines Y or vice versa." There are three ways of determining the causal direction and approaching the theoretical ideal in order to define a cause-effect relationship.

- Manipulation of the independent variable: this is experimental analysis, one of the fundamental scientific methods.

- Temporal succession: some variables logically precede others. For example, primary socialization precedes (and influences) the voting behaviour of someone who is 30 years old. In this case the endogeneity problem is solved. In some cases the succession is obvious. At the empirical level, this is important for determining the direction of causality.

- Logical impossibility: political orientation cannot determine social class. Some factors cannot depend on other factors. Such logical impossibilities make it possible to establish the direction of the causal link.

Control of extraneous variables

Empirically, the level of political participation varies according to several variables. This is the whole issue of controlling extraneous variables. One could say that 90% of what we do in social science research is to make sure that we control the effect of explanatory factors that do not interest us. The decisive element is the control of the other variables.

- Application of the "ceteris paribus" rule: one can determine the cause-effect relationship knowing that nothing else intervenes, all other things being equal. A relationship between one phenomenon and another holds all other things being equal, that is, we must ensure that all other factors are controlled or, in other words, made constant.

- Dependence on the logic of the research design: the way of controlling the role of other potential causes depends on the research design, for example an observational design. How to get around the problem differs from one research design to another. The objective is always to control the effect of other potential causes in order to isolate the one whose effect is to be shown.

Fallacious causal relationship

Definition

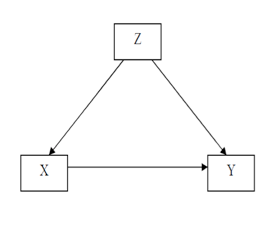

Fallacious (spurious) causal relationship: an apparent but non-existent causal relationship. We thought we had found a causal relationship, but in the end there was none, only a covariation. It is a covariation between two variables (X and Y) which does not stem from a causal link between X and Y, but from the fact that X and Y are both influenced by a third variable Z. The variation of Z produces the simultaneous variation of X and Y without there being a causal relationship between the two.

There is always the danger that an observed relationship may not be indicative of a causal relationship.
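The definition can be illustrated with a minimal simulation. The data are invented: Z is drawn at random, and X and Y are each built from Z plus independent noise, with deliberately no term linking X to Y. The helper `pearson` and all parameter values are assumptions made for this sketch.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(0)  # fixed seed so the run is reproducible
n = 5000

# Z is the third variable; X and Y both depend on Z, never on each other.
z = [rng.gauss(0, 1) for _ in range(n)]
x = [zi + rng.gauss(0, 1) for zi in z]
y = [zi + rng.gauss(0, 1) for zi in z]

r_xy = pearson(x, y)
print(f"correlation between X and Y: {r_xy:.2f}")
```

Under these assumptions the expected correlation between X and Y is 0.5, even though neither variable influences the other: the covariation comes entirely from Z.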

Example of a Fallacious Causal Relationship

Example 1

Voting intentions are influenced by several factors (age, gender, education, social class, political orientation of the family, etc.).

A link can be found between education (X) and voting (Y), but ultimately it is social class (Z) that determines both. Therefore, control variables must be introduced! Otherwise, what is observed may be a non-causal covariation.

A second illustration: the use of television by two candidates in a presidential election to communicate their programme (X).

We want to explain the vote (Y), but the vote is influenced by several factors, such as education and social class: there is multicausality. The idea is to see the effect of exposure to the television campaign on voters. Suppose we find a very strong relationship between X and Y. Can we trust this covariation?

Voters who followed the television campaign (X) voted more often for one of the two candidates (Y). One could say that exposure to the campaign produces the vote, but one must take into account that older voters (Z) watch television more often, and that age also influences voting (older voters lean right, younger voters lean left).

Age (Z) thus influences both exposure to the television campaign (X), because older people stay at home and watch TV, and voting for one of the two candidates (Y).

Thus, the covariation between X and Y cannot be taken as causal, because the variable Z influences the other two variables at the same time. To check this, a control variable must be introduced.

We look at the factors that plausibly explain what we want to explain.

Example 2

For example, if we look at the number of refrigerators and the level of democracy, it makes no sense to claim a causal link between the two. However, a third variable, the degree of urbanization, generates democracy according to certain theories and at the same time increases the number of refrigerators. Thus, the covariation is not a cause-effect relationship, because a third variable influences both.

Example 3

If political participation (Y) is explained by associative engagement (X), one might suspect that this relationship is misleading because another variable influences both participation and associative engagement. This variable (Z) could be, for instance, political interest. Again, the relationship would be misleading; it would be a mistake to conclude from the simple observation of this relationship that there is a causal link between X and Y, because the effect of another variable has not been controlled.

Two ways to empirically control a causal statement

To deal with misleading causal relationships (multicausality and endogeneity), there are two ways of empirically controlling a causal assertion.

Covariation analysis (observational data)

- Control: transformation of extraneous variables (Z) into constants. A variable that does not vary cannot covary, so it has no effect. We have to consider the possibility of misleading relationships. For example, one looks only at those in the highest social class who go to university, and then analyses their vote; class therefore plays no role in the analysis. This check can be done manually.

- Depuration (purification): statistical control. We build a statistical model containing the variable X that interests us, then introduce the control variables, including those strongly related to X and Y; if we still find an effect of X on Y, the relationship was not fallacious. This is done by a computer, which calculates the relationships between all variables and takes all covariations into account, so that it can be determined whether or not the correlation is spurious. Statistical control is a way of controlling variables and empirically testing the cause-and-effect relationship.
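Control by making Z constant can be sketched with the television example. The voters below are simulated, and every probability is an invented assumption: age group drives both exposure to the campaign and the vote, while exposure itself has no effect. The function names `simulate_voter` and `vote_gap` are hypothetical.

```python
import random

rng = random.Random(1)  # fixed seed so the run is reproducible

def simulate_voter():
    """One hypothetical voter: age group drives both TV exposure and vote."""
    old = rng.random() < 0.5
    watched = rng.random() < (0.8 if old else 0.2)  # X depends on Z only
    voted_a = rng.random() < (0.7 if old else 0.3)  # Y depends on Z only
    return old, watched, voted_a

voters = [simulate_voter() for _ in range(20000)]

def vote_gap(rows):
    """Share voting for candidate A among watchers minus non-watchers."""
    watchers = [voted for _, watched, voted in rows if watched]
    others = [voted for _, watched, voted in rows if not watched]
    return sum(watchers) / len(watchers) - sum(others) / len(others)

# Without control: watchers and non-watchers differ in age composition.
gap_all = vote_gap(voters)

# With control: Z is held constant by looking at one age group at a time.
gap_old = vote_gap([row for row in voters if row[0]])
gap_young = vote_gap([row for row in voters if not row[0]])

print(f"all voters:   {gap_all:+.2f}")
print(f"older only:   {gap_old:+.2f}")
print(f"younger only: {gap_young:+.2f}")
```

In the pooled sample, watchers appear far more likely to vote for candidate A; within each age group the gap is close to zero, which is what one expects when the original covariation was produced by Z.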

Experimental (experimental data)

This is the implementation of an experimental method. In covariation analysis we act once we have the data; in other words, we manipulate the data a posteriori. In the experimental method we act beforehand, through the research design (how do we produce the data?). The experimental method avoids misleading relationships by splitting a sample into two groups and assigning individuals to them at random. According to the law of large numbers, the two groups can be expected to be similar, so the only difference between them will be the independent variable.

Conclusion: this is a reflection on causality. The question of causality remains at the theoretical level; we can only try to come closer to this ideal. We must look for a correlation, but we also have to rule out rival hypotheses by introducing control variables; if we do not, we can fall into a false causal relationship.

Covariation: it may be close to a causal relationship, but it may also be the effect of a Z (an unobserved variable) that influences both what we want to explain (dependent variable) and what is supposed to explain it (independent variable). One variable influences the other two at the same time; there is also the possibility of interaction between explanatory variables.

How can variables be controlled to avoid falling into a misleading relationship? There are two ways:

- Covariation analysis: carried out on observational data, after the data have been collected. It takes two forms, control and depuration.

- Control: we make an extraneous variable constant. When an assumed relationship between two variables is rendered fallacious by a third variable, we take only the individuals who share the same value of that third variable. For example, for the relationship between exposure to television and voting, we take people of the same age. We have thus controlled that variable by making it constant.

- Depuration: this is statistical control, applied to all the variables.

- Experimental analysis: we set up a research design that prevents Z variables from affecting our analysis.

Four basic scientific methods according to Arend Lijphart

Three or even four methods can be mentioned:

- Experimental method (differentiated from others)

- Statistical method

- Comparative method

- Case Study

The first three are the basic methods that make it possible to control variables, also known as "additional explanatory factors". The aim is to test hypotheses and rule out some of them in favour of others.

The first three methods all ultimately have the same main objective: to seek cause-and-effect relationships and to eliminate the noise produced by Zs that could produce a misleading relationship. They pursue this goal in different ways. All three establish general empirical propositions while controlling for all the other variables (Z). They seek to approach the theoretical cause-effect link between an observed phenomenon and its potential causes, with different degrees of success; as we have seen, the experimental method is the one that comes closest to it. The idea is to arrive at empirical corroboration of causal assertions.

Experimental method

Principles

- Random assignment: all individuals have the same chance of being in the experimental group or in the control group. This idea follows from the law of large numbers: randomization is supposed to rule out any other explanation.

- Manipulation of the independent variable (treatment): once the groups are equivalent, the researcher introduces an input into one of the two groups.

The sample is split into two groups that are the same on all dimensions except one, which is the one whose effect we want to test. Assigning each person randomly makes the two groups similar. If the treatment is then introduced in one of the two groups and a change is observed there that is not observed in the control group, it can be concluded that the introduced variable has a causal effect.
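The two principles can be sketched as a simulation. All numbers are assumptions made for illustration: subjects have a background characteristic Z that strongly affects the outcome, and `true_effect` is an invented treatment effect. Random assignment is done by shuffling subject indices and splitting them in half.

```python
import random
from statistics import mean

rng = random.Random(42)  # fixed seed so the run is reproducible
n = 10000
true_effect = 2.0  # assumed treatment effect, chosen for illustration

# Each subject has a background characteristic Z that affects the outcome.
z = [rng.gauss(0, 1) for _ in range(n)]

# Random assignment: every subject has the same chance of being treated.
ids = list(range(n))
rng.shuffle(ids)
treated = set(ids[: n // 2])

# Manipulation of the independent variable: only the treated group
# receives the input.
outcome = [
    3.0 * z[i] + (true_effect if i in treated else 0.0) + rng.gauss(0, 1)
    for i in range(n)
]

# Balance check: the two groups should be similar on Z.
z_gap = mean(z[i] for i in range(n) if i in treated) - mean(
    z[i] for i in range(n) if i not in treated
)

# Difference in mean outcomes between experimental and control group.
estimate = mean(outcome[i] for i in range(n) if i in treated) - mean(
    outcome[i] for i in range(n) if i not in treated
)

print(f"difference in Z between groups: {z_gap:+.3f}")
print(f"estimated treatment effect:     {estimate:+.2f}")
```

Because assignment is random, Z is nearly balanced across the groups without ever being measured by the researcher, and the difference in means lands close to the assumed effect.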

Types of experiments

- Laboratory experiment: these experiments are carried out in laboratories. Individuals are divided into groups to which stimuli are applied in order to observe whether or not there is an effect. However, individuals are removed from their natural conditions, which may lead to results that are artifacts of the experimental conditions.

- Field experiment: these experiments take place in the natural context, where other factors may be involved. They have been applied in political science. The principles remain the same: subjects are randomly distributed into two groups, the independent variable is manipulated, and it is then determined whether the treatment had an effect on the experimental group and not on the control group. This avoids the criticism of artificiality levelled at laboratory experiments.

- Quasi-experiment: this design retains the idea that the researcher manipulates the independent variable, but there is no random assignment of subjects to an experimental and a control group. This is done when subjects cannot be randomly divided into two groups and one is forced to take the groups that actually exist. In other words, the researcher controls the treatment but cannot assign subjects randomly. In the field, it is sometimes hard to do otherwise. This is usually the design that is used, because there is a factor that cannot be controlled.

Example

A social psychologist wanted to test the effects of collective goals on interpersonal relationships. He wanted to see the extent to which conflicts and negative stereotypes disappear when a group of people are given a common objective. It is an experiment carried out directly under natural conditions. He first let the children interact and cooperate with each other in order to create a collective identity, then divided the group randomly into two. He made the two teams play football against each other; rivalries and even negative stereotypes against the other team emerged. He then put the group back together and defined a common goal requiring cooperation. Afterward he found that these conflicts, negative stereotypes and hostilities turned into true cooperation.

Statistical method

Here the research design does not allow us to test a priori whether the correlations are genuine; we have to do it afterwards.

- Conceptual (mathematical) manipulation of empirically observed data: we have observational data, and we try to control for other factors statistically, using partial correlations. A distinction is made between experimental and observational data.

- Partial correlations: the correlation between two variables once the effect of other variables that could influence what one wants to explain has been removed from the analysis, i.e. controlled. In other words, the other Zs are treated as if they were constants, following a process of depuration (purification). It is a logic of variable control: we control the variables that could influence what we would like to explain. In the voting example, anything that could explain the vote should be taken into account, especially if this external factor is thought to influence both the vote and the independent variable (media exposure). So we can tell whether we are getting close to a causal effect.

What is important is to go beyond a simple bivariate analysis; it is necessary to move towards a multivariate analysis or introduce other variables into the explanatory model.
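The move from a bivariate to a multivariate analysis can be sketched with the standard first-order partial correlation formula, r_xy.z = (r_xy - r_xz * r_yz) / sqrt((1 - r_xz^2)(1 - r_yz^2)). The data are simulated and every coefficient is an assumption: in the first scenario the link between X and Y comes only from Z, in the second X also has an effect of its own on Y.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def partial_corr(xs, ys, zs):
    """Correlation between xs and ys after controlling for zs."""
    r_xy, r_xz, r_yz = pearson(xs, ys), pearson(xs, zs), pearson(ys, zs)
    return (r_xy - r_xz * r_yz) / ((1 - r_xz ** 2) * (1 - r_yz ** 2)) ** 0.5

rng = random.Random(5)  # fixed seed so the run is reproducible
n = 5000
z = [rng.gauss(0, 1) for _ in range(n)]        # e.g. age
x = [zi + rng.gauss(0, 1) for zi in z]         # e.g. media exposure

# Scenario 1 (assumed): Y depends on Z only, so the X-Y link is spurious.
y_spurious = [zi + rng.gauss(0, 1) for zi in z]

# Scenario 2 (assumed): Y depends on Z and also on X itself.
y_genuine = [zi + 0.5 * xi + rng.gauss(0, 1) for zi, xi in zip(z, x)]

raw_spurious = pearson(x, y_spurious)
partial_spurious = partial_corr(x, y_spurious, z)
partial_genuine = partial_corr(x, y_genuine, z)

print(f"spurious case: raw r = {raw_spurious:.2f}, "
      f"partial r = {partial_spurious:.2f}")
print(f"genuine case:  partial r = {partial_genuine:.2f}")
```

In the spurious scenario the sizeable bivariate correlation drops to roughly zero once Z is controlled; in the second scenario a clear partial correlation remains, which is the pattern that corroborates a causal hypothesis.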

Comparative method

All social science research is comparative in nature. We always compare something, implicitly or explicitly. One may wonder about the objectives of the comparison. We have seen that any scientific method tries to establish general empirical propositions by controlling for all the other variables, but according to some, such as Tilly, comparison, i.e. the comparison of observation units, has four objectives.

The goals of comparison according to Charles Tilly are:

- Individualizing: the aim is to highlight the characteristics of a given unit of analysis with respect to a certain phenomenon, using comparison to bring out the specificity of one case. In comparative politics, the case is the country and one compares countries; the aim is, for example, to show certain characteristics of the political system in Switzerland compared to other countries. If direct democracy shapes political values, we can compare Switzerland with countries that do not have direct democracy and analyse the impact of this characteristic on people. The goal is to characterise, individualise and make more specific the features of one country compared to another.

- Generalizing: we compare not to individualize but to generalize; we include the greatest possible number of cases in order to establish general empirical propositions. For some, generalization is one of the two main objectives of the comparative method. We compare behaviours to see whether the configurations and effects found in one context are also found in another.

- Variation-finding: the aim is to search for systematic variations and to test a theory by eliminating the theories that compete with the one we want to put forward. According to Tilly, this is the best way to compare. We are trying to test a causal hypothesis, so that rival hypotheses can be ruled out.

- Encompassing: the aim is to globalize (systemic approach); all countries of the world, or all possible units of comparison, are included. The idea is that if one of these units of comparison is removed, the whole system changes. For Professor Giugni, this is not a comparative approach, because nothing is actually compared.

There are thus different objectives of comparison; to compare is to act on the selection of cases.

Comparison strategies to look for systematic variations (Przeworski and Teune)

A distinction can be made between two comparison strategies, which are logical and methodological approaches to case selection. They are ways of dealing with the issue of causality when we have observational data. The two comparative research designs are based on similar (analogous) cases and on different (contrasting) cases. Each has its advantages and disadvantages.

- Comparison between contrasting cases (most different systems design): we choose cases that are as different as possible.

- Comparison of similar cases (most similar systems design): the aim is to control all variables except the one to be analysed (example: France and Switzerland).

John Stuart Mill reflected on how to analyse this causal link. By selecting cases in particular ways, we aim to approach the ideal of the cause-and-effect relationship. We are not in the context of statistical control here: it is through case selection that we try to come closer to this ideal.

John Stuart Mill distinguishes between two methods:

- The method of agreement (most different systems design): the logic is to choose countries that are very different but similar on one key factor: X is present everywhere. We then observe that Y, the phenomenon we want to explain, is also present everywhere. In other words, we look for cases in which the phenomenon occurs together with the factor whose effect we want to demonstrate: every time there is X, we find Y. We conclude that Y is produced by X, because it cannot be produced by anything else: the other factors differ from one country to another. The logic is to choose cases that differ on most of the factors we can think of, and then to see whether the variable we want to explain and the one that explains it are present together.

- The method of difference (most similar systems design): we look for countries that are as similar as possible. A distinction is made between positive and negative cases. We look for positive cases that have the factors A, B and C and negative cases that also have A, B and C. In the positive cases a factor X is present that is absent in the negative cases. Y is present when X is present and absent when X is absent. The conclusion is that X is the cause of the effect Y, because the presence of Y cannot have been produced by A, B and C, which have been made constant.
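The logic of the two methods can be written down as a pair of small functions operating on cases described by present or absent factors. This is only a sketch: the cases, the factor names A, B, C, X, Y and the function names are hypothetical, and real comparisons rarely offer such clean tables.

```python
def method_of_agreement(cases, outcome):
    """Return the factors present in every case where the outcome occurs."""
    positives = [case for case in cases if case[outcome]]
    factors = [name for name in positives[0] if name != outcome]
    return [f for f in factors if all(case[f] for case in positives)]

def method_of_difference(positive, negative, outcome):
    """Return the factors on which a positive and a negative case differ."""
    return [
        name for name in positive
        if name != outcome and positive[name] != negative[name]
    ]

# Hypothetical, very different cases that share only X (and the outcome Y).
different_cases = [
    {"A": True,  "B": False, "C": False, "X": True, "Y": True},
    {"A": False, "B": True,  "C": False, "X": True, "Y": True},
    {"A": False, "B": False, "C": True,  "X": True, "Y": True},
]
agreement = method_of_agreement(different_cases, "Y")
print(agreement)  # ['X']

# Hypothetical, very similar cases that differ only on X.
positive_case = {"A": True, "B": True, "C": True, "X": True,  "Y": True}
negative_case = {"A": True, "B": True, "C": True, "X": False, "Y": False}
difference = method_of_difference(positive_case, negative_case, "Y")
print(difference)  # ['X']
```

In both tables X is the only candidate left standing, either because it is the sole common factor (agreement) or because it is the sole factor that differs (difference).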

Example - most different systems design: we have several cases (1, 2, ..., n); a case can be an individual, a country, etc. Each case has a number of properties, for instance characteristics of different countries (unemployment, development). X is the independent variable and Y is what we want to explain. In this comparison logic, the cases are very different and are similar on only one property, X. This variable therefore explains Y, because it is the only common factor.

In Table 2 (most similar systems design) there are positive and negative cases; these cases are perfectly similar on the highest possible number of attributes, properties and variables, but there is a crucial difference in what is supposed to explain what we want to explain: a factor that is present in one case is absent in the other. In one case there is Y, in the other there is not; the difference is attributed to the variable that is not present in both cases. This method tries to get closer to the experimental approach, because the two groups are made as similar as possible (on a, b, c) and differ only on the independent variable. Professor Giugni prefers this design because it is closer to the ideal of experimentation. One example is to study students attending the course over two years and change the slides that are given; the final result (the average score) is then compared between the two years.

Example - most similar systems design: I compare countries that resemble each other (France and Switzerland), and I want to show the effect of police repression, which is stronger in France; this is taken to be the only difference between the two countries. We then see that the radicalisation of social movements is stronger in France. The problem is that the "A"s, i.e. the factors that are similar between the two countries, are never identical but only resemble each other; we can only get closer to the ideal. This problem arises only with the most similar systems design, which is why some researchers prefer the other approach, in which only the differences have to be found. In addition, there is also the problem of endogeneity, i.e. knowing which factor influences the other.

Case Study

It is a more qualitative method, which distinguishes it from the other three methods, which are more oriented towards quantitative, positivist research. Arend Lijphart makes several distinctions; there is a kind of cleavage between experimental data and observational data.

While the experimental method is based on a large number of cases and the comparative method on a number of cases that may vary, the case study is based on only one case. However, a case study may extend over more than one case, as the boundary is flexible.

The case study is the study of a single case, without comparison. A case can be many things, such as a party, a person or a country. We try to study a particular case in depth, but not as extensively as with the other three methods, which do not study cases so thoroughly.

In other words, the great advantage of the case study is that one can go much deeper into the knowledge of the case. A case study is by definition intensive, while a quantitative study is extensive. One method is based on standardisation with the idea of generalising, while the other is based on the in-depth interpretation of a specific case.

While the statistical method aims to generalize, the case study by definition does not allow generalization; its objective is different. There are different kinds of case study and different ways of conducting one. There are six variants:

- the first two focus on the specific case.

- the other four aim to create or generate a theory.

The case study can be used to achieve different goals: on the one hand, one may want to know a particular situation better in a descriptive way; on the other hand, one can study cases in a way that makes it possible to generate a theory in the long term.

According to Arend Lijphart, the aim of research is to test and verify a theory and to generalize results.

The first two types do not seek to create hypotheses; the idea is to shed light on a case:

- Atheoretical: there is no theory; the study is purely descriptive. It is exploratory research, for example the study of a new organization that one only wants to know and describe. In this case the results cannot be generalized, because they are tied to the particular case studied. It is used for situations or cases that have never been studied before.

- Interpretative: theoretical propositions that exist in the literature are used; in this qualitative approach the role of the literature is less important. An existing generalization is applied to a given case to see how it applies there; the interest lies in the specific case, without any wish to create a theory, just as in the atheoretical case study. The objective is not to confirm or invalidate the generalization, but only to apply it. A particular situation is interpreted in the light of an existing theory.

The other four types seek to create a theory or draw something general from the cases. Their objective is to create or generate theories or hypotheses:

- Hypothesis-generating: we want to create hypotheses where there are none. I study a case not only because I am interested in it, but also because I want to formulate hypotheses that I will test elsewhere, for example by studying an organization that engages in politics. We study a given situation because we want hypotheses that we cannot find elsewhere. A case is explored in order to formulate hypotheses. Hypotheses have essentially three sources: the existing literature, sociological imagination and exploratory studies.

- Theory-confirming: a theory is tested on a particular case in order to confirm it. This type of case study is not very useful, because a single case that fits an existing theory does little to reinforce that theory.

- Theory-invalidating: this has much more value. A theory is applied to a specific case not to confirm it but to show that it does not work; one seeks to overturn a theory. This leads us to reflect on the theory, which is important in order to develop it.

- Deviant case: the case study seeks to show why a case deviates from a generalization. This type is very useful when one wants to overturn a theory. We look for a situation that deviates from an existing generalization and try to explain why it does.

Annexes

- Elisabeth Wood, "An Insurgent Path to Democracy: Popular Mobilization, Economic Interests and Regime Transition in South Africa and El Salvador," Comparative Political Studies, October 2001. Translation published in Estudios Centroamericanos, No. 641-2, 2002. URL: http://cps.sagepub.com/content/34/8/862.short